Davos 2026: Data Centres, AI Diffusion, and Nuclear Power

In the alpine town of Davos, Switzerland, global leaders, corporate executives, venture capitalists, hedge fund managers, and academics from the fields of business, technology, and policy convene each January for the World Economic Forum, where they shape agendas at the global, regional, and industry levels. The Forum positions the annual meeting as a five-day platform for dialogue and collaboration rather than a formal policymaking body. Its value lies in showing where capital and political will are beginning to align.

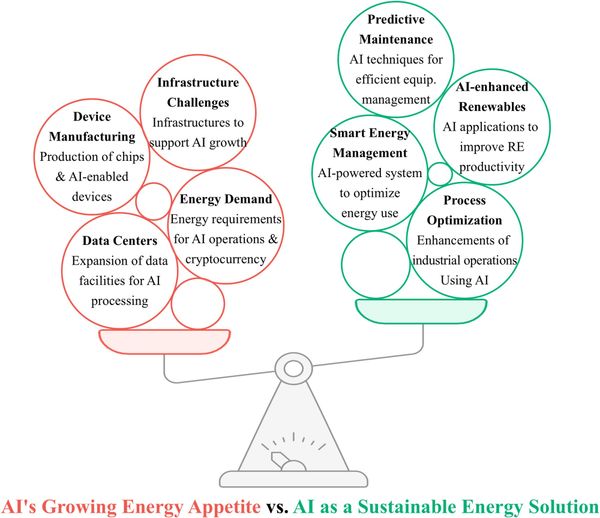

This year, that alignment once again centered on artificial intelligence. What distinguished Davos 2026, however, was a change in emphasis. Among many of the most influential builders and financiers of AI, discussion shifted away from model benchmarks and new capabilities and toward the larger question of deployment: whether grids, data centres, semiconductor supply, institutional preparedness, and governance structures can scale quickly enough to support the systems being built.

The question was no longer just about innovation, but about the foundational adequacy of the supporting system. Could the physical, economic, and institutional foundations of the world scale with sufficient speed, discipline, and prudence to sustain the demands of this historical transition?

The industry's top leaders exemplified this shift. Microsoft CEO Satya Nadella emphasized AI diffusion, grid and data-centre capacity, and the institutional changes required for deployment at scale. Nvidia CEO Jensen Huang described AI less as a single technology than as a layered infrastructure system, extending from energy and semiconductors to cloud platforms, models, and applications. In that framing, competitive advantage depends not merely on excellence at one layer, but on the coordinated scaling of the entire stack. Demis Hassabis of Google DeepMind and Dario Amodei of Anthropic, by contrast, concentrated more directly on the trajectory of advanced AI itself: AGI timelines, safety, controllability, governance, and the broader social consequences of systems whose capabilities may eventually exceed present institutional assumptions.

Other themes ran alongside this infrastructure turn: renewed interest in nuclear power as a potential source of firm energy for the new economy, and the growing costs associated with data-centre expansion. We will discuss each one here.

Davos 2026 underscored one idea: the advance of artificial intelligence is now constrained not just by model design, but by infrastructure, energy, and geopolitics.

Data Centres and the Shift to Infrastructure

When AI was primarily a research problem, the relevant actors were labs, universities, and a handful of well-capitalised startups. Now that it has become an infrastructure problem, the actors have changed. Utilities, sovereign wealth funds, semiconductor foundries, and anyone who controls land, water rights, or permitting authority are now as central to AI's trajectory as the engineers writing the code or, more accurately now, refining the code that AI outputs.

The competitive dynamic has shifted from who has the best model to who controls the territory those models run on.

Control of compute capacity has become analogous to control of oil reserves in the 20th century.

Overarching Value Distribution

Most optimistic growth projections were accompanied by an implicit caveat: productivity gains from AI are not just a bonus, but a requirement. The emerging consensus was not just that AI can generate a tremendous surplus in economic value, but that it must do so in ways that are visible, measurable, and broadly distributed.

Otherwise, the industry risks losing the societal mandate to continue scaling. Satya Nadella articulated this most directly, but the concern was raised broadly: if the benefits of AI accrue primarily to technology firms while the costs, such as energy consumption, environmental strain, and labor displacement, are externalized across communities and public systems, then political and, above all, public tolerance for continued expansion will erode much further.

Below is the exchange on AI diffusion, in which Larry Fink, the CEO of BlackRock (not to be confused with Blackstone, which owns QTS, one of the major data centre operators in the world), asked Satya Nadella: “Can you describe how this process of diffusion across economies, across companies, across people, and countries? How does that play out?”

“The zeitgeist is a little bit about the admiration for AI in its abstract form or as technology. But I think we, as a global community, have to get to a point where we are using it to do something that changes the outcomes of people and communities and countries and industries,” Nadella said. “Otherwise, I don’t think this makes much sense, right? In fact, I would say we will quickly lose even the social permission to actually take something like energy, which is a scarce resource, and use it to generate these tokens, if these tokens are not improving health outcomes, education outcomes, public sector efficiency, private sector competitiveness across all sectors, small and large. And that, to me, is ultimately the goal.” — Satya Nadella

Nadella went on to explain that process. The costs he alluded to, however, are very real, and the leaders in Davos were, to their credit, not pretending otherwise.

The resource consumption of data centres has moved well beyond an environmental footnote. According to the International Energy Agency (IEA), global electricity demand from data centres is projected to more than double by 2030, reaching approximately 945 terawatt-hours, slightly more than Japan's entire current annual electricity consumption. In advanced economies specifically, data centres are projected to account for more than 20% of all electricity demand growth by 2030.
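To make the scale of that projection concrete, the short sketch below computes the compound annual growth rate implied by reaching roughly 945 TWh in 2030. The 2024 baseline of about 415 TWh and the six-year horizon are assumptions introduced here for illustration, not figures stated in this article, so the result should be read as a rough order of magnitude rather than an official estimate.

```python
def implied_annual_growth(start_twh: float, end_twh: float, years: int) -> float:
    """Compound annual growth rate needed to go from start_twh to end_twh."""
    return (end_twh / start_twh) ** (1 / years) - 1


# Assumption: approximate 2024 data-centre electricity demand (not from this article).
BASELINE_TWH = 415.0
# Figure cited in the text above.
PROJECTED_TWH = 945.0
# Assumption: the projection runs from 2024 to 2030.
YEARS = 2030 - 2024

rate = implied_annual_growth(BASELINE_TWH, PROJECTED_TWH, YEARS)
print(f"Implied growth in data-centre electricity demand: {rate:.1%} per year")
```

Under these assumptions, demand would need to grow at a mid-teens percentage rate every year, which is far faster than overall electricity demand typically grows.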

Beyond electricity, the water demands of data centre cooling introduce a compounding pressure, driven by the substantial thermal output of high-performance AI processors. This is particularly acute in regions already navigating freshwater scarcity. By some reports, AI data centres now use more water each year than the world drinks in bottled water.

While some estimates suggest that data centre water consumption may rival or exceed major categories of global water use, such comparisons should be treated with scepticism. Reliable quantification remains difficult, as many technology companies do not publicly disclose detailed water usage specific to their AI operations.

For instance, a typical conversation with an LLM such as ChatGPT (10-50 queries) can use up to 500 millilitres of fresh water, roughly one water bottle. One can only imagine how many millions of gallons are used each day. With new data centre projects announced every week (the US alone has over 4,000 data centres and counting) and many hundreds more under construction around the world, one can imagine what the figures will look like by 2030.
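The per-conversation estimate can be scaled up with some simple arithmetic. The sketch below assumes the "one water bottle" is a standard 0.5 litre bottle spread over 10 to 50 queries, and it uses a purely hypothetical volume of one billion queries per day. Neither the daily volume nor the function name comes from a published source, so the output is illustrative only.

```python
LITRES_PER_US_GALLON = 3.785411784


def daily_water_range_litres(queries_per_day, bottle_litres=0.5, queries_per_bottle=(10, 50)):
    """Return (low, high) daily water use in litres.

    Assumes one bottle of water is consumed per conversation of
    10 to 50 queries; fewer queries per bottle means more water per query.
    """
    fewest, most = queries_per_bottle
    low = queries_per_day * bottle_litres / most
    high = queries_per_day * bottle_litres / fewest
    return low, high


# Hypothetical daily query volume, chosen only to illustrate the scale.
HYPOTHETICAL_QUERIES_PER_DAY = 1_000_000_000

low_l, high_l = daily_water_range_litres(HYPOTHETICAL_QUERIES_PER_DAY)
low_gal = low_l / LITRES_PER_US_GALLON
high_gal = high_l / LITRES_PER_US_GALLON
print(f"{low_l:,.0f} to {high_l:,.0f} litres per day")
print(f"{low_gal:,.0f} to {high_gal:,.0f} US gallons per day")
```

Even at this hypothetical volume, the range lands in the millions of gallons per day, which is the order of magnitude the paragraph above gestures at.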

The environmental costs of data-centre growth relate not only to water consumption but also to pollution in water systems, especially where wastewater handling is poorly controlled. Nitrogen compounds such as nitrates are highly soluble and can move readily through local water supplies, farmland, and groundwater. Nitrates are not inherently harmful in every context: they occur naturally in soil and vegetables and can support nitric-oxide production in the body. High concentrations in contaminated water, though, raise a different set of concerns.

Under certain conditions in the body, nitrates can contribute to the formation of carcinogenic and teratogenic N-nitroso compounds, so their spread through wastewater can pose broader risks to both public health and agricultural ecosystems.

More broadly, the chemical footprint of large cooling systems is not limited to nitrates. Depending on the design of the facility and its water-treatment systems, cooling operations can also involve biocides, PFAS, heavy metals, corrosion inhibitors, antiscalants, antifouling agents, and glycols used in heat-transfer and freeze-protection systems. Some of these substances are necessary for operational reliability, but if discharge, blowdown, leakage, or wastewater handling is poorly managed, they can introduce further risks.

You cannot build megastructures of this scale and simultaneously argue that their environmental and social footprint is a secondary concern. The leaders at Davos who understood this made the more credible case, insisting that the benefits must grow large and diffuse enough to justify the costs.

However, driving these costs toward zero through more innovative AI architectures and cleaner data centre optimization systems remains an urgent area of research, one that must be resolved quickly.

Many forces already degrade the environment, and projections for the future look dimmer with each passing year. It is our moral obligation to stand in solidarity and safeguard our world substantially more, as technology accelerates and we reach even greater heights of interconnectedness.

With AI expansion under way, our actions now will matter more than in any other period.

There are eras defined by discovery and others defined by consequence; ours will be defined by both.

"We have a single mission: to protect and hand on the planet to the next generation." — Francois Hollande, former President of France, Davos 2015

Energy Limits and the Return of Nuclear Power

The energy problem at Davos 2026 also surfaced a conversation that would have seemed fringe even just a few years ago: nuclear power, and the uranium supply chains that feed it.

A dedicated nuclear session drew government representatives from the United States, Czech Republic, India, and the United Kingdom. The consensus framing marked a departure from the renewable-first orthodoxy that has dominated energy policy discourse for a decade: nuclear as the only dispatchable, low-emissions baseload source capable of meeting AI's compounding, around-the-clock power demands.

The corporate sector had begun acting on that conclusion well before the forum convened. Microsoft's twenty-year power purchase agreement for the restarted Three Mile Island reactor in Middletown, Pennsylvania (the site of the worst commercial nuclear accident in US history), capable of powering between fifteen and twenty hyperscale data centres at full consumption, had already set the precedent. Meta, at the same time, announced the expansion of three nuclear facilities and the reopening of an Illinois reactor.

At Davos, uranium developers were making an argument: if technology firms intend to anchor the AI build-out in nuclear power, they may eventually need to move upstream and help secure uranium supply in the same way automakers once moved to secure battery minerals before the EV boom. The implication is that data-centre investment is now a question of fuel security as well.

The AI infrastructure race is slowly becoming a uranium story, and the companies that recognize that earliest will have secured a supply-chain advantage that no amount of software optimization can replicate.

Electrical power, Elon Musk argued, is the single binding limit on AI deployment in the United States. Very soon, the industry will be producing more chips than it can physically turn on. His pointed comparison was China, which is deploying over 100 gigawatts of solar capacity per year — building the energy foundation for AI infrastructure at a pace that US tariff policy and grid inertia are currently making difficult to match.

Technological and Societal Integration

Alongside infrastructure and energy, a third limitation is becoming increasingly visible: governance.

The EU's AI Act moves from partial to broad applicability on August 2, 2026, less than seven months out. Frontier labs are now operating inside a compression cycle: capabilities in coding, reasoning, and autonomous action are improving faster than institutions can adapt, but regulatory obligations are arriving on fixed deadlines regardless of readiness.

Hassabis and Amodei both alluded to this dynamic, albeit in different ways: capability acceleration shortens the window for societal adjustment, but slowing down isn't an option when competitive dynamics and geopolitical pressure are both pushing in the other direction. Safety and governance are no longer abstract principles to address after the technology matures; they are real constraints to navigate while the technology is still moving.

Conclusion

Davos 2026 did not provide definitive solutions to these issues. Artificial intelligence is entering a phase where its trajectory will be determined not just by algorithmic breakthroughs, but by infrastructure capacity, energy availability, and societal acceptance. Competition is fiercer than ever, and the resource requirements are intensifying.

The urgency of these challenges demands immediate and sustained engagement, as the trajectory of increasingly powerful AI systems and societal integration hinges on our collective capacity to respond effectively.

"How did you do it? How did you evolve, how did you survive this technological adolescence without destroying yourself?" Dario Amodei quotes this line in his essay "The Adolescence of Technology," drawing on a scene from Carl Sagan’s Contact in which a prospective representative of humanity says what she would ask alien visitors.

As someone enthralled by ML and global politics, I find it increasingly clear that we are advancing far into uncharted territory, unlike anything else in history. Alongside the shift in focus towards AI infrastructure, more powerful AI systems are on the horizon, increasingly without a human in the loop.

The World Economic Forum’s 2025 report says employers expect large job disruption this decade from technology shifts. Largely driven by AI, Amazon's layoffs of 16,000 employees, Block's 40% reduction in its workforce, and Oracle's elimination of 30,000 employees are only the start. A moment may arrive during this 5th Industrial Revolution, suddenly and without warning (as does happen in AI research labs), when a breakthrough emerges that reshapes the structure of almost everyone's daily life, for better or for worse.

History has shown how rapidly the world can transform, as it did during the onset of COVID-19; the trajectory of AI holds the potential for change of equal, if not greater, magnitude. The imperative, then, is preparedness, coming from knowledge, foresight, and execution.

While I do not claim to possess all the answers, I know enough of human nature to recognize that our response to such moments will matter as much as, if not more than, the technology that precipitates them.

Through artificial intelligence, humanity will attain a velocity once reserved for gods, perhaps without first acquiring the discipline necessary to wield it.